
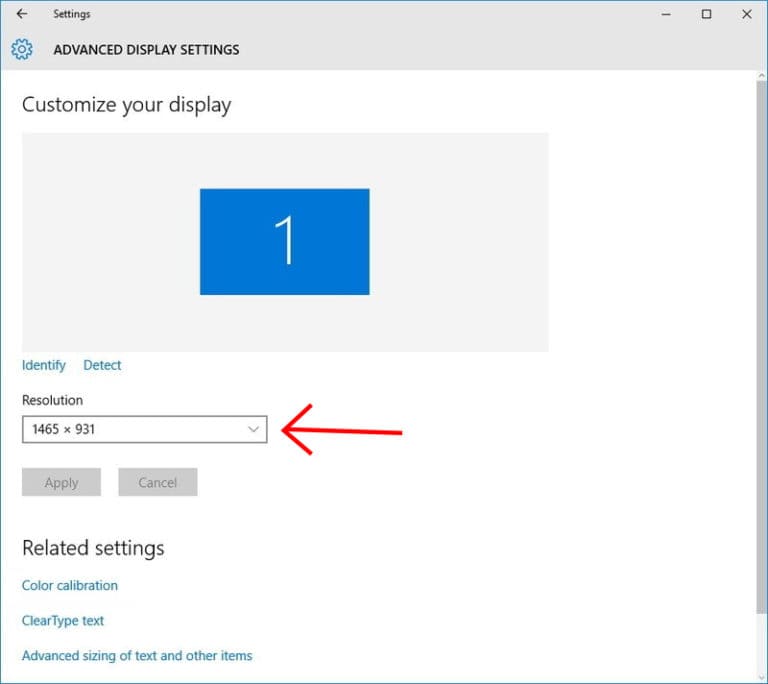

A computer screen's resolution is set in the Monitor (or Display) Control Panel by choosing screen dimensions, such as 640x480 pixels or 800x600 pixels. Let's assume the screen is set to 800x600 pixels. Since the screen can only display 800x600 pixels, a 300x500 image will take up more than a third of the width and most of the height. If the screen were set to 640x480 pixels, that same 300x500 image would be taller than the entire screen and you would have to scroll down to see the bottom of it.

So what resolution should I use when I scan? By default, most scanners on campus, when set to Screen in the TWAIN software, will set the resolution to somewhere around 72 or 75 pixels per inch.
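The screen comparison above is simple division, and a short sketch can make it concrete. The function name `screen_coverage` and its return keys are made up for illustration; this is not part of any scanner or display software.

```python
def screen_coverage(image, screen):
    """Compare an image to a screen, both given as (width, height) in pixels.

    Returns the fraction of the screen's width and height the image covers
    at 100% zoom, and whether scrolling would be needed to see all of it.
    """
    img_w, img_h = image
    scr_w, scr_h = screen
    return {
        "width_fraction": img_w / scr_w,
        "height_fraction": img_h / scr_h,
        "needs_scrolling": img_w > scr_w or img_h > scr_h,
    }


if __name__ == "__main__":
    # The 300x500 image from the text on the two screen settings it mentions.
    for screen in [(800, 600), (640, 480)]:
        print(screen, screen_coverage((300, 500), screen))
```

On the 800x600 setting the image fits without scrolling; on 640x480 its 500-pixel height exceeds the 480 available, so it does not.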

A screen and an image can both be measured in pixels. Resolution specifies how many pixels represent a linear inch of an image or monitor screen. When a 3x5 inch photograph is scanned at 100 pixels per inch, the resulting image is 300x500 pixels, because each inch is represented by 100 separate pixels. Scanning at a higher resolution results in a larger image. For example, scanning the same 3x5 inch photograph at 200 pixels per inch produces a 600x1000 pixel image, because each inch of the picture is represented by 200 pixels. A computer screen has its own resolution as well.
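The scanning arithmetic above is just inches multiplied by pixels per inch. Here is a minimal sketch; the helper name `scanned_size` is hypothetical and only illustrates the calculation.

```python
def scanned_size(width_in, height_in, ppi):
    """Return (width_px, height_px) for a print scanned at `ppi` pixels per inch."""
    return round(width_in * ppi), round(height_in * ppi)


if __name__ == "__main__":
    print(scanned_size(3, 5, 100))  # (300, 500)
    print(scanned_size(3, 5, 200))  # (600, 1000)
```

Doubling the pixels per inch doubles both dimensions, so the image holds four times as many pixels in total.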

When working with image and screen resolution, we talk about pixels. Pixels are simply blocks of color arranged in a grid. The grid makes up whatever image you are looking at.

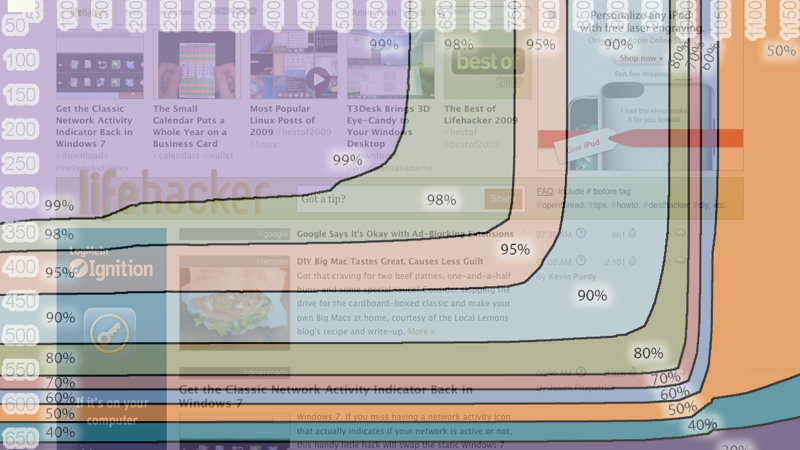

A letter "T" can be displayed easily with 9 pixels (three black across the top row, one black in the middle position in the second row, and one black in the middle position in the third row). As you increase the resolution, items on the screen get smaller, so the "T" would eventually get so small that only 4 pixels would be trying to display it, resulting in an unreadable letter. Now imagine that with a face in a crowd, or the detail of a photo. Things start to look worse, not better, as the resolution increases beyond what the hardware can support. My advice to you is to either stick with 1360x768, which is the absolute best your TV can do given its physical pixel count, or get a monitor or TV that can support a higher resolution.

For images that are being displayed on the screen, higher resolution does not equate to better clarity as it does with printed images. For screen images, resolution simply means size. If your resolution is higher, the image dimensions will be larger. Larger images may look clearer, but there is a trade-off in screen real estate. Although very small images are often seen as "fuzzy" when viewed at a larger scale, the difference in quality as the images get larger is hardly noticeable when they're viewed at their intended sizes.
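The 9-pixel "T" described above can be written out as a small grid. This is only an illustrative sketch: the names `T_3X3` and `render` are invented here, with 1 standing for a black pixel and 0 for a white one.

```python
# The 3x3 "T": three black pixels on top, one in the middle of each row below.
T_3X3 = [
    [1, 1, 1],
    [0, 1, 0],
    [0, 1, 0],
]


def render(grid):
    """Draw a pixel grid as text, using '#' for black and '.' for white."""
    return "\n".join("".join("#" if px else "." for px in row) for row in grid)


if __name__ == "__main__":
    print(render(T_3X3))
```

Printed this way, the shape is still clearly a "T" because each stroke gets at least one whole pixel.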

The answer is fairly simple, but not always easy to explain. The highest resolution your TV supports is 1360x768 (a 720p-class panel). Your video card (a great card; the card is not the issue) can support much higher resolutions, but it has detected the highest resolution of your TV and therefore limited the settings to 1360x768, which is the best resolution your TV can display perfectly. Anything higher would NOT make the picture better (since the number of pixels is limited by the TV hardware / screen), but it could (and would) make it worse, since a resolution that is not an exact multiple of the hardware resolution would force a conversion that sacrifices clarity for completeness.

Imagine trying to display a picture of the letter "T" with only 4 pixels. You would wind up with either two black on top and two white on bottom, or four black, or three black and one white. In any case, the "T" would not look like a "T", and would instead look like a dash or a square or an upside-down "L".
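To see what squeezing the "T" into 4 pixels does, here is a rough sketch that shrinks a 3x3 "T" to 2x2 by nearest-neighbor sampling. That sampling rule is an assumption chosen for simplicity; real TVs and video cards typically use other scaling methods, and the names `T_3X3` and `downsample_nearest` are hypothetical.

```python
# A 3x3 "T" (1 = black pixel, 0 = white pixel).
T_3X3 = [
    [1, 1, 1],
    [0, 1, 0],
    [0, 1, 0],
]


def downsample_nearest(grid, new_w, new_h):
    """Shrink a pixel grid by keeping one source pixel per target pixel."""
    old_h, old_w = len(grid), len(grid[0])
    return [
        [grid[r * old_h // new_h][c * old_w // new_w] for c in range(new_w)]
        for r in range(new_h)
    ]


if __name__ == "__main__":
    for row in downsample_nearest(T_3X3, 2, 2):
        print("".join("#" if px else "." for px in row))
```

With this rule the result is three black pixels and one white one, which is one of the outcomes described above and no longer reads as a "T".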
